
Generalized mean


From Wikipedia, the free encyclopedia


N-th root of the arithmetic mean of the given numbers raised to the power n

Plot of several generalized means $M_p(1, x)$.

In mathematics, generalized means (or power mean or Hölder mean, from Otto Hölder)[1] are a family of functions for aggregating sets of numbers. These include as special cases the Pythagorean means (arithmetic, geometric, and harmonic means).

Definition

If $p$ is a non-zero real number, and $x_1, \dots, x_n$ are positive real numbers, then the generalized mean or power mean with exponent $p$ of these positive real numbers is[2][3]

$$M_p(x_1,\dots,x_n) = \left(\frac{1}{n}\sum_{i=1}^{n} x_i^{p}\right)^{1/p}.$$

(See p-norm.) For $p = 0$ we set it equal to the geometric mean (which is the limit of means with exponents approaching zero, as proved below):

$$M_0(x_1,\dots,x_n) = \left(\prod_{i=1}^{n} x_i\right)^{1/n}.$$

Furthermore, for a sequence of positive weights $w_i$ we define the weighted power mean as[2]

$$M_p(x_1,\dots,x_n) = \left(\frac{\sum_{i=1}^{n} w_i x_i^{p}}{\sum_{i=1}^{n} w_i}\right)^{1/p}$$

and when $p = 0$, it is equal to the weighted geometric mean:

$$M_0(x_1,\dots,x_n) = \left(\prod_{i=1}^{n} x_i^{w_i}\right)^{1/\sum_{i=1}^{n} w_i}.$$

The unweighted means correspond to setting all $w_i$ to the same positive value (for instance $w_i = 1$).
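The definitions above translate almost directly into code. The following is a minimal sketch, not a reference implementation: the names `weightedPowerMean` and `powerMean` are chosen here for illustration, inputs are assumed to be positive, and the weight and value lists are assumed to have the same length.

```haskell
-- | Weighted power mean with exponent p of positive values xs and
--   positive weights ws (both lists are assumed to have equal length).
--   The case p == 0 is the weighted geometric mean.
weightedPowerMean :: Double -> [Double] -> [Double] -> Double
weightedPowerMean p ws xs
  | p == 0    = exp (sum (zipWith (\w x -> w * log x) ws xs) / total)
  | otherwise = (sum (zipWith (\w x -> w * x ** p) ws xs) / total) ** (1 / p)
  where
    total = sum ws  -- normalising constant, sum of the weights

-- | Unweighted power mean: every weight is set to 1.
powerMean :: Double -> [Double] -> Double
powerMean p xs = weightedPowerMean p (map (const 1) xs) xs
```

For example, `powerMean 1 [1, 2, 3]` is the arithmetic mean of the three values and `powerMean 0 [1, 4]` is their geometric mean.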

Special cases

A few particular values of $p$ yield special cases with their own names:[4]

minimum

$$M_{-\infty}(x_1,\dots,x_n) = \lim_{p\to-\infty} M_p(x_1,\dots,x_n) = \min\{x_1,\dots,x_n\}$$

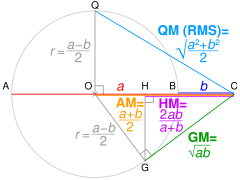

A visual depiction of some of the specified cases for $n = 2$ with $a = x_1 = M_\infty$ and $b = x_2 = M_{-\infty}$: harmonic mean, $H = M_{-1}(a, b)$; geometric mean, $G = M_0(a, b)$; arithmetic mean, $A = M_1(a, b)$; quadratic mean, $Q = M_2(a, b)$.

harmonic mean

$$M_{-1}(x_1,\dots,x_n) = \frac{n}{\frac{1}{x_1}+\dots+\frac{1}{x_n}}$$

geometric mean

$$M_0(x_1,\dots,x_n) = \lim_{p\to 0} M_p(x_1,\dots,x_n) = \sqrt[n]{x_1\cdot\dots\cdot x_n}$$

arithmetic mean

$$M_1(x_1,\dots,x_n) = \frac{x_1+\dots+x_n}{n}$$

root mean square or quadratic mean[5][6]

$$M_2(x_1,\dots,x_n) = \sqrt{\frac{x_1^{2}+\dots+x_n^{2}}{n}}$$

cubic mean

$$M_3(x_1,\dots,x_n) = \sqrt[3]{\frac{x_1^{3}+\dots+x_n^{3}}{n}}$$

maximum

$$M_{+\infty}(x_1,\dots,x_n) = \lim_{p\to\infty} M_p(x_1,\dots,x_n) = \max\{x_1,\dots,x_n\}$$
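As a small worked illustration of these special cases (the values $x_1 = 1$, $x_2 = 4$ are chosen here only as an example), the named means evaluate to:

$$\begin{aligned}
M_{-\infty}(1,4) &= \min\{1,4\} = 1, \\
M_{-1}(1,4) &= \frac{2}{\frac{1}{1}+\frac{1}{4}} = 1.6, \\
M_{0}(1,4) &= \sqrt{1\cdot 4} = 2, \\
M_{1}(1,4) &= \frac{1+4}{2} = 2.5, \\
M_{2}(1,4) &= \sqrt{\frac{1+16}{2}} \approx 2.915, \\
M_{3}(1,4) &= \sqrt[3]{\frac{1+64}{2}} \approx 3.191, \\
M_{+\infty}(1,4) &= \max\{1,4\} = 4.
\end{aligned}$$

The values increase with the exponent, as the generalized mean inequality predicts.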

To prove that $M_p$ tends to $M_0$ (the geometric mean) as $p \to 0$, we can rewrite the definition of $M_p$ using the exponential function as

$$M_p(x_1,\dots,x_n) = \exp\left(\ln\left[\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{1/p}\right]\right) = \exp\left(\frac{\ln\left(\sum_{i=1}^{n} w_i x_i^{p}\right)}{p}\right)$$

In the limit $p \to 0$, we can apply L'Hôpital's rule to the argument of the exponential function. We assume that $p \in \mathbb{R}$ but $p \neq 0$, and that the sum of the $w_i$ is equal to 1 (without loss of generality);[7] differentiating the numerator and denominator with respect to $p$, we have

$$\begin{aligned}
\lim_{p\to 0}\frac{\ln\left(\sum_{i=1}^{n} w_i x_i^{p}\right)}{p}
&= \lim_{p\to 0}\frac{\frac{\sum_{i=1}^{n} w_i x_i^{p}\ln x_i}{\sum_{j=1}^{n} w_j x_j^{p}}}{1} \\
&= \lim_{p\to 0}\frac{\sum_{i=1}^{n} w_i x_i^{p}\ln x_i}{\sum_{j=1}^{n} w_j x_j^{p}} \\
&= \frac{\sum_{i=1}^{n} w_i \ln x_i}{\sum_{j=1}^{n} w_j} \\
&= \sum_{i=1}^{n} w_i \ln x_i \\
&= \ln\left(\prod_{i=1}^{n} x_i^{w_i}\right)
\end{aligned}$$

By the continuity of the exponential function, we can substitute back into the above relation to obtain

$$\lim_{p\to 0} M_p(x_1,\dots,x_n) = \exp\left(\ln\left(\prod_{i=1}^{n} x_i^{w_i}\right)\right) = \prod_{i=1}^{n} x_i^{w_i} = M_0(x_1,\dots,x_n)$$

To prove the limits as $p \to \pm\infty$, assume (possibly after relabeling and combining terms together) that $x_1 \geq \dots \geq x_n$. Then[2]

$$\begin{aligned}
\lim_{p\to\infty} M_p(x_1,\dots,x_n)
&= \lim_{p\to\infty}\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{1/p} \\
&= x_1 \lim_{p\to\infty}\left(\sum_{i=1}^{n} w_i \left(\frac{x_i}{x_1}\right)^{p}\right)^{1/p} \\
&= x_1 = M_\infty(x_1,\dots,x_n).
\end{aligned}$$

The formula for $M_{-\infty}$ follows from

$$M_{-\infty}(x_1,\dots,x_n) = \frac{1}{M_\infty(1/x_1,\dots,1/x_n)} = x_n.$$
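The rewriting of $M_p$ through the exponential and logarithm used in the proofs above also suggests a way to evaluate the mean numerically for very large or very small exponents, where $x_i^p$ would overflow or underflow. The following is a sketch under stated assumptions: the names `powerMeanLog` and `logSumExp` are hypothetical, inputs are assumed positive and non-empty, and the unweighted mean is used.

```haskell
-- | Unweighted power mean evaluated in the log domain:
--   M_p = exp ((log (sum x_i^p) - log n) / p), with x_i^p = exp (p * log x_i).
powerMeanLog :: Double -> [Double] -> Double
powerMeanLog p xs
  | p == 0    = exp (sum logs / n)  -- geometric mean, the p -> 0 limit
  | otherwise = exp ((logSumExp (map (p *) logs) - log n) / p)
  where
    logs = map log xs
    n    = fromIntegral (length xs)

-- | log (sum (map exp ys)), shifted by the maximum so that no
--   intermediate exponential overflows.
logSumExp :: [Double] -> Double
logSumExp ys = m + log (sum (map (\y -> exp (y - m)) ys))
  where
    m = maximum ys
```

With this form, `powerMeanLog 1000 [1, 4]` stays finite and is close to the maximum 4, `powerMeanLog (-1000) [1, 4]` is close to the minimum 1, and `powerMeanLog 1e-6 [1, 4]` is close to the geometric mean 2, in line with the three limits above.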

Properties

Let $x_1, \dots, x_n$ be a sequence of positive real numbers, then the following properties hold:[1]

1. $\min(x_1,\dots,x_n) \leq M_p(x_1,\dots,x_n) \leq \max(x_1,\dots,x_n)$. Each generalized mean always lies between the smallest and largest of the $x$ values.
2. $M_p(x_1,\dots,x_n) = M_p(P(x_1,\dots,x_n))$, where $P$ is a permutation operator. Each generalized mean is a symmetric function of its arguments; permuting the arguments of a generalized mean does not change its value.
3. $M_p(bx_1,\dots,bx_n) = b\cdot M_p(x_1,\dots,x_n)$. Like most means, the generalized mean is a homogeneous function of its arguments $x_1, \dots, x_n$. That is, if $b$ is a positive real number, then the generalized mean with exponent $p$ of the numbers $b\cdot x_1,\dots,b\cdot x_n$ is equal to $b$ times the generalized mean of the numbers $x_1, \dots, x_n$.
4. $M_p(x_1,\dots,x_{n\cdot k}) = M_p\left[M_p(x_1,\dots,x_k), M_p(x_{k+1},\dots,x_{2\cdot k}), \dots, M_p(x_{(n-1)\cdot k+1},\dots,x_{n\cdot k})\right]$. The computation of the mean can be split into computations of equal-sized sub-blocks.
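The properties above can be spot-checked numerically. The sketch below is illustrative only: all function names are chosen here, the scale factor 3 and block length 2 are arbitrary, comparisons use a small floating-point tolerance, and `blockOK` assumes the list length is a multiple of 2.

```haskell
-- | Unweighted power mean of positive values (p == 0 is the geometric mean).
powerMean :: Double -> [Double] -> Double
powerMean p xs
  | p == 0    = exp (sum (map log xs) / n)
  | otherwise = (sum (map (** p) xs) / n) ** (1 / p)
  where
    n = fromIntegral (length xs)

-- | Split a list into consecutive blocks of length k (k assumed positive).
chunksOf :: Int -> [a] -> [[a]]
chunksOf _ [] = []
chunksOf k ys = take k ys : chunksOf k (drop k ys)

-- | Equality up to a relative floating-point tolerance.
approxEq :: Double -> Double -> Bool
approxEq a b = abs (a - b) <= 1e-9 * max 1 (abs a)

-- | The mean lies between the minimum and the maximum.
boundedOK :: Double -> [Double] -> Bool
boundedOK p xs = minimum xs <= m + tol && m <= maximum xs + tol
  where
    m   = powerMean p xs
    tol = 1e-9

-- | Symmetry, checked for one particular permutation (reversal).
symmetricOK :: Double -> [Double] -> Bool
symmetricOK p xs = approxEq (powerMean p xs) (powerMean p (reverse xs))

-- | Homogeneity, checked for the scale factor b = 3.
homogeneousOK :: Double -> [Double] -> Bool
homogeneousOK p xs = approxEq (powerMean p (map (3 *) xs)) (3 * powerMean p xs)

-- | Block decomposition, checked for blocks of length k = 2.
blockOK :: Double -> [Double] -> Bool
blockOK p xs =
  approxEq (powerMean p xs) (powerMean p (map (powerMean p) (chunksOf 2 xs)))
```

Each check returns `True` for, say, exponents $-2$, $0$, $1$, $3$ and the sample `[1, 2, 3, 4, 5, 6]`; such checks illustrate the properties but do not prove them.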

Generalized mean inequality

Geometric proof without words that max(a, b) > root mean square (RMS) or quadratic mean (QM) > arithmetic mean (AM) > geometric mean (GM) > harmonic mean (HM) > min(a, b) of two distinct positive numbers a and b.[note 1]

In general, if $p < q$, then

$$M_p(x_1,\dots,x_n) \leq M_q(x_1,\dots,x_n)$$

and the two means are equal if and only if $x_1 = x_2 = \dots = x_n$.

The inequality is true for real values of $p$ and $q$, as well as positive and negative infinity values.

It follows from the fact that, for all real $p$,

$$\frac{\partial}{\partial p} M_p(x_1,\dots,x_n) \geq 0$$

which can be proved using Jensen's inequality.

In particular, for $p$ in {−1, 0, 1}, the generalized mean inequality implies the Pythagorean means inequality as well as the inequality of arithmetic and geometric means.
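The monotonicity in the exponent can be observed numerically. The following sketch uses names chosen here for illustration (`powerMean`, `monotoneIn`), assumes positive inputs and an increasing list of exponents, and allows a small tolerance for rounding.

```haskell
-- | Unweighted power mean of positive values (p == 0 is the geometric mean).
powerMean :: Double -> [Double] -> Double
powerMean p xs
  | p == 0    = exp (sum (map log xs) / n)
  | otherwise = (sum (map (** p) xs) / n) ** (1 / p)
  where
    n = fromIntegral (length xs)

-- | True when the means of xs are non-decreasing along the given
--   (increasing) list of exponents, up to a small rounding tolerance.
monotoneIn :: [Double] -> [Double] -> Bool
monotoneIn ps xs = and (zipWith (\a b -> a <= b + 1e-12) ms (tail ms))
  where
    ms = map (`powerMean` xs) ps
```

For instance, `monotoneIn [-3, -1, 0, 1, 2, 3] [1, 2, 4]` evaluates to `True`, covering the harmonic, geometric, arithmetic, quadratic and cubic means in order.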

Proof of the weighted inequality

We will prove the weighted power mean inequality. For the purpose of the proof we will assume the following without loss of generality:

$$\begin{aligned}
w_i &\in [0,1] \\
\sum_{i=1}^{n} w_i &= 1
\end{aligned}$$

The proof for unweighted power means can be easily obtained by substituting $w_i = 1/n$.

Equivalence of inequalities between means of opposite signs

Suppose an inequality between power means with exponents $p$ and $q$ holds:

$$\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{1/p} \geq \left(\sum_{i=1}^{n} w_i x_i^{q}\right)^{1/q}$$

Since it holds for all positive $x_i$, it holds in particular with each $x_i$ replaced by $1/x_i$:

$$\left(\sum_{i=1}^{n} \frac{w_i}{x_i^{p}}\right)^{1/p} \geq \left(\sum_{i=1}^{n} \frac{w_i}{x_i^{q}}\right)^{1/q}$$

We raise both sides to the power of −1 (a strictly decreasing function on the positive reals):

$$\left(\sum_{i=1}^{n} w_i x_i^{-p}\right)^{-1/p} = \left(\frac{1}{\sum_{i=1}^{n} w_i \frac{1}{x_i^{p}}}\right)^{1/p} \leq \left(\frac{1}{\sum_{i=1}^{n} w_i \frac{1}{x_i^{q}}}\right)^{1/q} = \left(\sum_{i=1}^{n} w_i x_i^{-q}\right)^{-1/q}$$

We get the inequality for means with exponents $-p$ and $-q$, and we can use the same reasoning backwards, thus proving the inequalities to be equivalent, which will be used in some of the later proofs.

Geometric mean

For any $q > 0$ and non-negative weights summing to 1, the following inequality holds:

$$\left(\sum_{i=1}^{n} w_i x_i^{-q}\right)^{-1/q} \leq \prod_{i=1}^{n} x_i^{w_i} \leq \left(\sum_{i=1}^{n} w_i x_i^{q}\right)^{1/q}.$$

The proof follows from Jensen's inequality, making use of the fact that the logarithm is concave:

$$\log \prod_{i=1}^{n} x_i^{w_i} = \sum_{i=1}^{n} w_i \log x_i \leq \log \sum_{i=1}^{n} w_i x_i.$$

By applying the exponential function to both sides and observing that as a strictly increasing function it preserves the sign of the inequality, we get

$$\prod_{i=1}^{n} x_i^{w_i} \leq \sum_{i=1}^{n} w_i x_i.$$

Taking $q$-th powers of the $x_i$ yields

$$\begin{aligned}
&\prod_{i=1}^{n} x_i^{q\cdot w_i} \leq \sum_{i=1}^{n} w_i x_i^{q} \\
&\prod_{i=1}^{n} x_i^{w_i} \leq \left(\sum_{i=1}^{n} w_i x_i^{q}\right)^{1/q}.
\end{aligned}$$

Thus, we are done for the inequality with positive $q$; the case for negatives is identical but for the swapped signs in the last step:

$$\prod_{i=1}^{n} x_i^{-q\cdot w_i} \leq \sum_{i=1}^{n} w_i x_i^{-q}.$$

Of course, taking each side to the power of a negative number $-1/q$ swaps the direction of the inequality:

$$\prod_{i=1}^{n} x_i^{w_i} \geq \left(\sum_{i=1}^{n} w_i x_i^{-q}\right)^{-1/q}.$$
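As a numerical illustration of the two-sided bound on the geometric mean (the values $w_1 = w_2 = \tfrac12$, $x_1 = 1$, $x_2 = 4$ and $q = 2$ are chosen here only as an example):

$$\left(\tfrac12\cdot 1^{-2} + \tfrac12\cdot 4^{-2}\right)^{-1/2} = \sqrt{\tfrac{32}{17}} \approx 1.372
\;\leq\; 1^{1/2}\cdot 4^{1/2} = 2
\;\leq\; \left(\tfrac12\cdot 1^{2} + \tfrac12\cdot 4^{2}\right)^{1/2} = \sqrt{\tfrac{17}{2}} \approx 2.915.$$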

Inequality between any two power means

We are to prove that for any $p < q$ the following inequality holds:

$$\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{1/p} \leq \left(\sum_{i=1}^{n} w_i x_i^{q}\right)^{1/q}$$

If $p$ is negative and $q$ is positive, the inequality is equivalent to the one proved above:

$$\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{1/p} \leq \prod_{i=1}^{n} x_i^{w_i} \leq \left(\sum_{i=1}^{n} w_i x_i^{q}\right)^{1/q}$$

The proof for positive $p$ and $q$ is as follows. Define the function $f : \mathbb{R}_+ \to \mathbb{R}_+$,

$$f(x) = x^{\frac{q}{p}}.$$

$f$ is a power function, so it has a second derivative:

$$f''(x) = \left(\frac{q}{p}\right)\left(\frac{q}{p} - 1\right)x^{\frac{q}{p}-2}$$

which is strictly positive within the domain of $f$, since $q > p$, so we know $f$ is convex.

Using this and Jensen's inequality, we get:

$$\begin{aligned}
f\left(\sum_{i=1}^{n} w_i x_i^{p}\right) &\leq \sum_{i=1}^{n} w_i f(x_i^{p}) \\
\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{q/p} &\leq \sum_{i=1}^{n} w_i x_i^{q}
\end{aligned}$$

After raising both sides to the power of $1/q$ (an increasing function, since $1/q$ is positive) we get the inequality which was to be proven:

$$\left(\sum_{i=1}^{n} w_i x_i^{p}\right)^{1/p} \leq \left(\sum_{i=1}^{n} w_i x_i^{q}\right)^{1/q}$$

Using the previously shown equivalence we can prove the inequality for negative $p$ and $q$ by replacing them with $-q$ and $-p$, respectively.
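As a small worked check of the Jensen step (the values $p = 1$, $q = 2$, $w_1 = w_2 = \tfrac12$, $x_1 = 1$, $x_2 = 3$ are chosen here only for illustration), $f(x) = x^{2}$ is convex and

$$\left(\tfrac12\cdot 1 + \tfrac12\cdot 3\right)^{2} = 4 \;\leq\; \tfrac12\cdot 1^{2} + \tfrac12\cdot 3^{2} = 5,
\qquad\text{so}\qquad M_1 = 2 \;\leq\; \sqrt{5} \approx 2.236 = M_2.$$

For the corresponding negative exponents, the same data give

$$M_{-2} = \left(\tfrac12\cdot 1^{-2} + \tfrac12\cdot 3^{-2}\right)^{-1/2} = \tfrac{3}{\sqrt{5}} \approx 1.342 \;\leq\; M_{-1} = \left(\tfrac12\cdot 1^{-1} + \tfrac12\cdot 3^{-1}\right)^{-1} = 1.5.$$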

Generalized f-mean

The power mean could be generalized further to the generalized f-mean:

$$M_f(x_1,\dots,x_n) = f^{-1}\left(\frac{1}{n}\cdot\sum_{i=1}^{n} f(x_i)\right)$$

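This covers the geometric mean without using a limit, with $f(x) = \log(x)$. The power mean is obtained for $f(x) = x^{p}$. Properties of these means are studied in de Carvalho (2016).[3]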
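The generalized f-mean maps naturally onto a higher-order function. The sketch below is illustrative: the names `fMean`, `geometricMean` and `powerMeanVia` are chosen here, and the caller is assumed to supply a function together with its correct inverse on the positive reals.

```haskell
-- | Generalized f-mean: apply f to each value, take the arithmetic
--   mean, then apply the inverse of f (passed explicitly as fInv).
fMean :: (Double -> Double) -> (Double -> Double) -> [Double] -> Double
fMean f fInv xs = fInv (sum (map f xs) / fromIntegral (length xs))

-- | Geometric mean as the f-mean with f = log; no limit is needed.
geometricMean :: [Double] -> Double
geometricMean = fMean log exp

-- | Power mean with non-zero exponent p as the f-mean with f x = x ** p.
powerMeanVia :: Double -> [Double] -> Double
powerMeanVia p = fMean (** p) (** (1 / p))
```

For example, `geometricMean [1, 4]` is approximately 2 and `powerMeanVia (-1) [1, 4]` is approximately 1.6, the harmonic mean of the same values.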

Applications


Signal processing


A power mean serves as a non-linear moving average which is shifted towards small signal values for small $p$ and emphasizes big signal values for big $p$. Given an efficient implementation of a moving arithmetic mean called smooth, one can implement a moving power mean according to the following Haskell code.

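```haskell
-- | Moving power mean: raise each sample to the power p, apply the given
--   moving arithmetic mean, then take the p-th root of each result.
powerSmooth :: Floating a => ([a] -> [a]) -> a -> [a] -> [a]
powerSmooth smooth p = map (** recip p) . smooth . map (** p)
```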
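To try the function out, a moving arithmetic mean has to be supplied. The sketch below uses a deliberately simple (not efficient) windowed average as a stand-in; `movingAverage` and `movingRms` are hypothetical names introduced here, the window width is assumed positive, and `powerSmooth` is repeated so that the block is self-contained.

```haskell
import Data.List (tails)

-- | Simple moving arithmetic mean over all full windows of width k.
--   This recomputes every window sum, so it is not an efficient choice.
movingAverage :: Int -> [Double] -> [Double]
movingAverage k xs =
  [ sum w / fromIntegral k
  | w <- map (take k) (tails xs)
  , length w == k  -- drop the incomplete windows at the end
  ]

-- | Moving power mean, as in the article's code.
powerSmooth :: Floating a => ([a] -> [a]) -> a -> [a] -> [a]
powerSmooth smooth p = map (** recip p) . smooth . map (** p)

-- | Moving root mean square (p = 2) over windows of width 2.
movingRms :: [Double] -> [Double]
movingRms = powerSmooth (movingAverage 2) 2
```

With this stand-in, `movingRms [3, 4, 3]` yields two values, each the root mean square of 3 and 4. A large positive exponent in place of 2 would push each output towards the window maximum, and a large negative one towards the window minimum.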

See also


Average

Heronian mean

Inequality of arithmetic and geometric means

Lehmer mean – also a mean related to powers

Minkowski distance

Quasi-arithmetic mean – another name for the generalized f-mean mentioned above

Root mean square

Notes

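1. If AC = a and BC = b, then OC = AM of a and b, and radius r = QO = OG. Using Pythagoras' theorem, QC² = QO² + OC² ∴ QC = √(QO² + OC²) = QM. Using Pythagoras' theorem, OC² = OG² + GC² ∴ GC = √(OC² − OG²) = GM. Using similar triangles, HC / GC = GC / OC ∴ HC = GC² / OC = HM.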

References

1. Sýkora, Stanislav (2009). "Mathematical means and averages: basic properties". Stan's Library. III. Castano Primo, Italy: Stan's Library. doi:10.3247/SL3Math09.001.

2. Bullen, P. S. (2003). Handbook of Means and Their Inequalities. Dordrecht, Netherlands: Kluwer. pp. 175–177.

3. de Carvalho, Miguel (2016). "Mean, what do you Mean?". The American Statistician. 70 (3): 764‒776. doi:10.1080/00031305.2016.1148632. hdl:20.500.11820/fd7a8991-69a4-4fe5-876f-abcd2957a88c.

4. Weisstein, Eric W. "Power Mean". MathWorld.

5. Thompson, Sylvanus P. (1965). Calculus Made Easy. ISBN 9781349004874. Retrieved 5 July 2020. [permanent dead link]

6. Jones, Alan R. (2018). Probability, Statistics and Other Frightening Stuff. ISBN 9781351661386. Retrieved 5 July 2020.

7. Handbook of Means and Their Inequalities (Mathematics and Its Applications).

Further reading


Bullen, P. S. (2003). "Chapter III - The Power Means". Handbook of Means and Their Inequalities . Dordrecht, Netherlands: Kluwer. pp. 175–265.

Retrieved from "https://en.wikipedia.org/w/index.php?title=Generalized_mean&oldid=1225552764". This page was last edited on 25 May 2024, at 05:31 (UTC). Text is available under the Creative Commons Attribution-ShareAlike License 4.0; additional terms may apply.
